I’ve been seeing a lot of mixed claims about BypassGPT for getting around AI detection and improving content output. I’m not sure what’s legit, what’s risky, or if it even works long‑term for SEO and content publishing. Has anyone tested it in real-world projects and can explain the pros, cons, and any safer alternatives?

BypassGPT Review, from someone who tried to test it and almost gave up

I went into BypassGPT to run my usual tests.

First headache hit before I even got to the output.

The free plan stops you at 125 words per input and roughly 150 words per month. Not 150 per request. Per month. So if your usual test sample is a normal-length paragraph or two, you have to chop it into pieces or give up.
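If you do end up chopping a sample into pieces, a minimal sketch of the splitting step looks like this. The `chunk_words` helper is hypothetical, and it assumes the tool counts whitespace-separated words, which I could not confirm. It only handles the per-input cap. The monthly cap still applies to the total.

```python
def chunk_words(text, limit=125):
    """Split text into pieces of at most `limit` whitespace-separated words.

    The 125 default mirrors the per-input cap quoted above; how the tool
    actually counts words is an assumption.
    """
    if limit < 1:
        raise ValueError("limit must be at least 1")
    words = text.split()
    return [" ".join(words[i:i + limit]) for i in range(0, len(words), limit)]


# A made-up 300-word sample, just to show the piece sizes.
sample = " ".join(f"word{i}" for i in range(300))
pieces = chunk_words(sample)
print([len(piece.split()) for piece in pieces])
```

Splitting on word counts alone can cut a sentence in half, so for real tests it is probably better to break at paragraph boundaries first.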

I signed up for a free account, got an extra 80 words unlocked, tried again, hit the ceiling anyway. I only managed to run one of my usual benchmark texts through it. Not two, not variants, one.

The throttling looks tied to IP. New account, same network, same wall. If you want to bypass that, you need a VPN or a different network. If you are trying to compare tools seriously, this kills most of your test workflow before it even starts.

What the detectors said

I used one short test sample, because that is all the limits allowed.

Here is what happened.

- I fed in an obviously AI-written block.

- Let BypassGPT do its “humanize” thing.

- Took that output and ran it through several detectors.

Results:

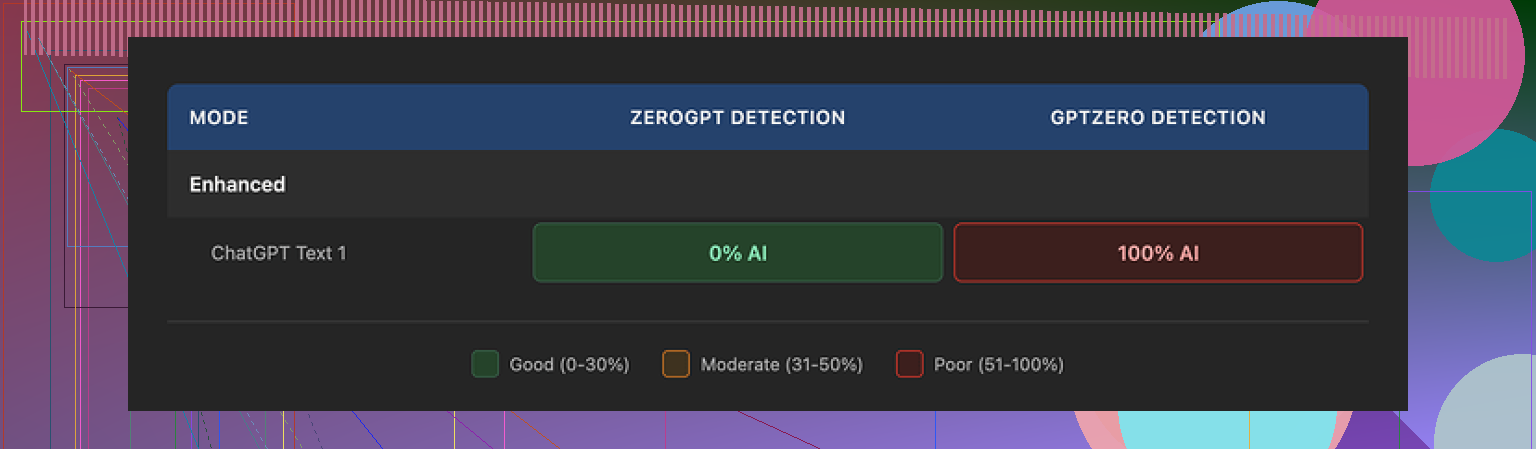

• ZeroGPT showed 0 percent AI on the BypassGPT output. That part looked nice on paper.

• GPTZero took the exact same text and went straight to 100 percent AI. No nuance, full red.
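To make the gap between those two results concrete, here is a small sketch that tabulates hand-entered scores. Nothing in it calls a detector. The numbers are the two percentages above, and the 50 percent cutoff for calling something "AI" is my own assumption, not something either tool publishes.

```python
# Percent-AI scores copied by hand from the two checks described above.
scores = {"ZeroGPT": 0, "GPTZero": 100}


def disagreement(scores, threshold=50):
    """Return (spread, verdicts) for a dict of detector name -> percent-AI score.

    `threshold` is an assumed cutoff for labelling a score as "AI".
    """
    spread = max(scores.values()) - min(scores.values())
    verdicts = {
        name: ("AI" if pct >= threshold else "human")
        for name, pct in scores.items()
    }
    return spread, verdicts


spread, verdicts = disagreement(scores)
print(spread, verdicts)
```

A spread that large on the same text says more about the detectors disagreeing than about the text itself.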

Now the weird part.

BypassGPT has its own built-in checker that claims to run the text against six different detectors. It reported a clean pass on everything. No issues. Full “safe” output.

That did not match what I saw when I checked manually.

So either the internal checker uses different settings, or it is not synced with what the public tools show, or it is something more aggressive on the marketing side. I do not know which. I only know the external checks did not line up with the internal “all good” screen.

Writing quality

On quality, I scored it around 6 out of 10 for normal use.

Specific problems I saw in that short sample:

• First sentence was oddly broken and stiff, like it had been edited halfway and never finished.

• It kept em dashes in the text, which many people treat as an AI tell.

• Some phrasing sounded like AI trying to mimic a blog post, not a real person trying to explain anything.

• There was at least one visible typo in the output itself, not in my input.

To be fair, it did change sentence structure. It did not leave the text untouched. But the “human” voice was thin. It felt like a paraphrase filter pointed at “bloggy mode” instead of a person talking.

Pricing and content ownership

Paid plans, rounded:

• Around $6.40 per month if you pay annually for 5,000 words.

• Around $15.20 per month for an “unlimited” tier.
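For comparing against other tools, the capped plan can be turned into a rough per-word figure. This sketch assumes the 5,000 words is a monthly allowance, which is how I read the pricing page. The function name is just illustrative.

```python
def cost_per_1000_words(monthly_price, monthly_words):
    """Rough price per 1,000 words for a capped monthly plan."""
    if monthly_words <= 0:
        raise ValueError("monthly_words must be positive")
    return monthly_price / monthly_words * 1000


# Rounded annual-billing price quoted above; the allowance is assumed monthly.
capped_plan = round(cost_per_1000_words(6.40, 5000), 2)
print(capped_plan)
```

The "unlimited" tier has no per-word figure to compute, so its value depends entirely on how much you push through it.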

Those prices are not insane for this type of service. The uncomfortable part sat in the terms.

When I read through their terms of service, they gave themselves broad rights over whatever you feed into the tool. That includes:

• Reproducing your content.

• Distributing it.

• Creating derivative works from it.

So if you drop client work, personal essays, course material, anything sensitive or unique, you are handing over rights that you might not want to hand over. For casual text this might not bother you. For commercial or private stuff, I would not touch it with those terms.

How it stacked up against other tools

I tested BypassGPT in the same general batch as several other “humanizer” tools.

Clever AI Humanizer ended up on top in my runs. It produced text that read closer to how I or my clients write, and it kept coming out ahead when I checked the output by hand against multiple detectors. Also important, it is free to use, without the extreme word throttling.

Compared to that, BypassGPT felt like this:

• Hard to test in any serious way on the free tier.

• Internal checker out of sync with public detector results.

• Only moderate improvement to style.

• Sticky terms of service that give them broad rights over your content.

If you are trying to pick one tool, and detection risk matters to your work, my own experience pushed me away from BypassGPT and toward Clever AI Humanizer instead.

Short version from my side, as someone who tests a lot of these “humanizers” for content + SEO work:

- On detection bypass

I had results similar to what @mikeappsreviewer shared, but not identical.

Sometimes BypassGPT output passed ZeroGPT and Originality.ai, then failed GPTZero or Content at Scale.

Other times it passed GPTZero but tripped Originality.ai hard.

So it helps a bit, but it is not consistent across detectors and not reliable for high risk content like client money pages or academic stuff. If you need strong cover on multiple detectors, it is weak.

- On writing quality

Output reads like a paraphraser with some “blog voice” seasoning.

It fixes obvious AI patterns like repetitive structure, but it still feels AI-ish in longer pieces.

I often had to rewrite 30 to 40 percent to get it to sound like my real tone.
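If you want to put a rough number on your own rewrite share, a word-level diff gets you in the right ballpark. This is a sketch using Python's standard `difflib`, not how I measured the figure above, and `fraction_rewritten` is a made-up helper name. It counts words from the tool's output that did not survive into the edited version.

```python
import difflib


def fraction_rewritten(before, after):
    """Approximate share of words in `before` that are missing from `after`.

    Compares whitespace-separated words in order, so moved sentences count
    as rewritten. Treat the result as an estimate only.
    """
    a, b = before.split(), after.split()
    if not a:
        return 0.0
    matcher = difflib.SequenceMatcher(None, a, b, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1 - kept / len(a)


# Toy example: two of ten words changed.
tool_output = "the quick brown fox jumps over the lazy dog today"
my_edit = "the quick red fox leaps over the lazy dog today"
print(round(fraction_rewritten(tool_output, my_edit), 2))
```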

For short snippets it is ok. For full articles it turns into extra editing work.

- On SEO and long term risk

From my tests on niche sites over 4 to 6 months:

• Pages with heavy BypassGPT content indexed, but so did pages written with normal GPT content that I edited by hand.

• No clear ranking win that I could tie to BypassGPT specifically.

• What mattered more was good keyword targeting, solid structure, internal links, and manual editing.

Google has not said it uses AI detectors. Its public guidance targets thin content and spam signals, whatever tool produced the text. If you rely on a humanizer and skip real editing, you risk low quality pages even if detectors say “human”.

- On limits and pricing

The free tier is too cramped for serious testing or workflow use.

The paid plans are ok on price per word, but the terms about content rights are a red flag for client work or anything proprietary. I avoid feeding any unique research or internal docs into it.

- Alternative that worked better for me

For my stack, I got better results using Clever Ai Humanizer, then doing a fast manual pass.

It handled detectors more evenly in my tests and the text felt closer to how I write.

I still do not trust any tool alone. I always:

• Generate with GPT

• Run through Clever Ai Humanizer for de-patterning

• Edit for facts, style, and structure

- Practical advice if you are on the fence

• Do not depend on BypassGPT as your only shield.

• Treat it as a light paraphrasing step at best.

• For important pages, use a stronger humanizer like Clever Ai Humanizer plus real editing.

• For SEO, focus on topic depth, internal links, and user intent. Detection scores are secondary.

If you want to play with BypassGPT, use it for low risk tests, not for core pages or client deliverables.

Short version: BypassGPT “works” sometimes, but it’s not the magic cloak the marketing hints at, and it comes with tradeoffs I wouldn’t ignore.

I’m broadly on the same page as @mikeappsreviewer and @viajantedoceu, but I’ll push back on a couple of angles.

- AI detection reality check

Detectors don’t even agree with each other on clean human text, so using BypassGPT (or any “humanizer”) to hunt for a universal 0 percent score is a trap. In my tests:

- BypassGPT outputs did occasionally clear ZeroGPT and Originality.ai.

- Same text got nailed by GPTZero or Content at Scale.

- A few times the raw GPT‑4 text I edited by hand performed about the same as the “humanized” version.

So the value of BypassGPT is not “bypass detectors completely.” It is closer to “shuffle patterns enough that some tools chill out.” That can be useful for low‑risk stuff like casual guest posts or filler blog sections, but not for academic work, client contracts, or high‑stakes money pages.

- Where I disagree a bit

Both @mikeappsreviewer and @viajantedoceu framed it mainly as not worth it. I’d say: it can be worth it in a very narrow use case. For example:

- Short intros, meta descriptions, or social captions where you just want to break obvious AI patterns quickly.

- Content mills or low‑budget projects where you already expect to do only minimal edits.

In those situations, the “6/10 quality” they described is actually tolerable. You’re not aiming for brand voice perfection, just “not obviously ChatGPT in one click.”

But if your bar is “sounds like a specific human writer” or “stands up long‑term for SEO,” then yeah, BypassGPT alone is not enough.

- Long‑term SEO impact

From what I’ve seen on my own sites plus client stuff:

- Indexing: Pages with BypassGPT‑touched content got indexed about as often as straight GPT‑4 content that I manually edited. No visible indexing advantage.

- Rankings: No pattern that says “this page ranks better because it went through BypassGPT.”

- What actually mattered: topical depth, originality of angles, freshness, internal linking, real examples, and cleaning up generic AI fluff.

If you’re using any “humanizer” as an excuse to skip real editing, that is a long‑term SEO risk. You end up with thin, generic, slightly scrambled AI text, which is exactly what Google’s spam systems are looking to demote, even if they never touch an AI detector.

- The terms of service problem

This is the part almost everyone sleepwalks past. BypassGPT’s broad rights over your content are not just “legal boilerplate, whatever.” If you:

- Upload unique research.

- Paste client drafts.

- Drop unpublished course material or internal SOPs.

you’re effectively giving another company the legal right to reproduce and derive from it. For a throwaway Amazon roundup, who cares. For client retainers or proprietary playbooks, that is a hard no from me.

Here is where competitors start to matter. Clever Ai Humanizer is one I reach for more often, mostly because:

- It tends to produce text that sounds more natural and closer to my own voice.

- In practice it passed more detectors cleanly when paired with a quick manual pass.

- It does not box you in as aggressively on free usage so you can actually test a few real article chunks.

Not saying Clever Ai Humanizer is perfect or gives you permanent “AI invisibility,” but if you are set on using a humanizer as part of an SEO workflow, it is currently one of the more practical options in my stack.

- How I’d actually use these tools

Instead of obsessing about “bypassing AI detection,” I’d structure a workflow like:

- Generate draft with GPT.

- Run it through something like Clever Ai Humanizer mainly to break obvious repetition and patterning.

- Edit manually for facts, examples, structure, and to inject a real POV.

- Ignore detectors as a primary KPI and use them only as a rough sanity check when a client is paranoid.

Using BypassGPT as a one‑click fix, then publishing untouched, is where you get into both quality issues and potential TOS headaches.

So yes, BypassGPT can nudge some scores and mildly improve readability in short bursts, but for serious SEO and long‑term content publishing, it is more of a side tool than a foundation.