I’ve been considering using WriteHuman AI for content and copy, but I’ve seen mixed opinions online and I’m worried about quality, originality, and pricing. Can anyone share real experiences with WriteHuman AI, including what it does well, where it falls short, and whether it’s worth paying for long term?

WriteHuman AI review from someone who burned a few hours on it

I tried WriteHuman after seeing it name-drop GPTZero in its marketing. That got my attention, so I pushed it pretty hard with some test samples.

Here is what happened.

First round of tests

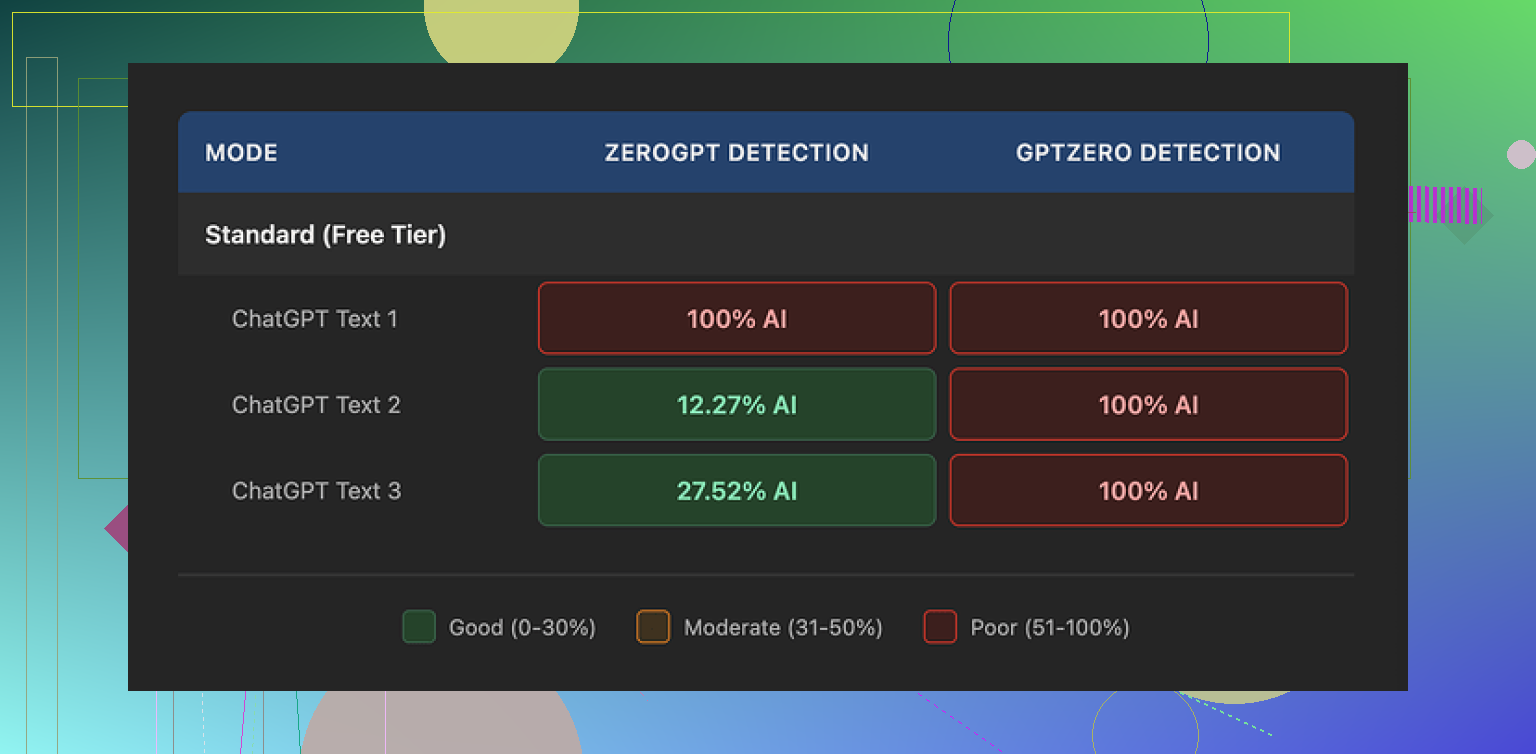

Their page leans on this idea of being tested against detectors, so I started with the obvious:

I took three different AI-generated samples and ran them through WriteHuman at default settings.

Then I sent the humanized versions straight into GPTZero.

All three outputs came back as 100% AI on GPTZero.

Not 60, not 80. Full 100 on every sample.

I thought I had messed up the copy-paste, so I repeated the tests with fresh prompts and fresh outputs. Same result.

Tried ZeroGPT too

Since GPTZero was a brick wall, I tried ZeroGPT to see if the behavior was any different.

Results:

• Sample 1: 100% AI

• Sample 2: around 12% AI

• Sample 3: around 28% AI

So ZeroGPT flagged one as fully AI, shrugged at another, and sat in the middle on the third.

It looked more random than reliable. I would not bet a grade or a job application on numbers that jump like that.
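To put a number on how much those scores jump, here is a minimal Python sketch that summarizes the post-humanization scores reported above. The ZeroGPT values for samples 2 and 3 are the approximate figures ("around 12%" and "around 28%"), and `summarize` is just an illustrative helper name, not part of any detector's API.

```python
from statistics import mean

# AI-probability scores (percent) after humanization, as reported above.
# The ZeroGPT values for samples 2 and 3 are approximate.
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [100, 12, 28],
}

def summarize(values):
    """Return the average score and the gap between highest and lowest."""
    return {"mean": round(mean(values), 1), "spread": max(values) - min(values)}

for detector, values in scores.items():
    print(detector, summarize(values))
```

An 88-point gap between the highest and lowest ZeroGPT score on three samples is why I would not read much into any single low result.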

The writing quality

The text it produced felt strange in places.

A few things stood out:

• Tone swings

Some paragraphs sounded formal and stiff, then the next one sounded casual and slightly off. It read like multiple people with different writing habits stitched together one after another.

• A visible typo

One of my outputs had “shfits” instead of “shifts”. I did not feed it a typo, it came out of WriteHuman that way.

On one hand, that sort of flaw might lower detector scores in some edge cases. On the other hand, if you hand this to a manager or teacher, they will notice the weirdness before any detector does.

If you care about publishing, or sending this to a client, you would need another editing pass on top of what WriteHuman gives you.

Pricing and limits

Their pricing felt steep for what I saw.

• Entry paid tier: 12 dollars per month on annual billing

• Included: 80 requests on the Basic plan

• Extra: paid plans unlock an “Enhanced Model” and more tone choices

There is no free tier with full access, so you have to spend first, then see if it works for your specific detector mix.

Two policy details matter here:

- They say in their own terms they do not guarantee bypass of any AI detector. So even if it fails on your target detector every time, they are still within their rules.

- No refunds. If it fails for your use, you eat the cost.

If your goal is “I need this to clear detector X”, you are paying for a tool that openly says it might not do the thing you bought it for, and you do not get your money back.
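For a rough sense of what that means per use, here is a small Python sketch of the arithmetic. It uses the 12 dollar price and the 80 requests from the list above. Two things are my assumptions, not something the pricing page confirmed in my notes: that the 80 requests are a monthly allowance, and that annual billing means committing to twelve months at that rate.

```python
# Figures from the pricing list above.
MONTHLY_PRICE_USD = 12   # entry tier, on annual billing
REQUESTS_INCLUDED = 80   # Basic plan; assumed here to be a monthly allowance

def cost_per_request(price, requests):
    """Price divided by the number of included requests."""
    return price / requests

def annual_cost(monthly_price):
    """Total for twelve months at the annual-billing monthly rate (assumption)."""
    return monthly_price * 12

print(f"Per request: ${cost_per_request(MONTHLY_PRICE_USD, REQUESTS_INCLUDED):.2f}")
print(f"Per year: ${annual_cost(MONTHLY_PRICE_USD)}")
```

Under those assumptions it works out to 15 cents per request and 144 dollars for the year, with no refund if it does not help on your detector.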

Data and training

Another red flag for some people.

Their terms say anything you submit can be used for AI training. In other words, your input text is not off-limits as training data.

If you deal with:

• client documents

• internal company docs

• academic work you do not want ending up as training data somewhere

then the only safe move is to avoid feeding it in at all.

There is no setting to opt out. Your only real opt out is not using the service.

How it compares to Clever AI Humanizer

Same day, same laptop, I tried Clever AI Humanizer.

Using the same type of prompts and the same detectors, I got better results with Clever. Its outputs consistently scored lower on AI probability than the WriteHuman outputs, and there was no paywall for basic use during my tests.

So for my own needs, Clever made more sense:

• better detector scores in practice

• no monthly bill blocking experiments

Who WriteHuman fits and who it does not

From my time with it:

You might tolerate WriteHuman if:

• you do not mind some odd tone shifts and you plan to rewrite the output anyway

• you are ok with your inputs being used as training data

• you are testing across several detectors and you only need small improvements here and there

You should probably avoid it if:

• you target GPTZero specifically, since my samples all hit 100% AI after humanization

• you need clean, stable text for clients, resumes, or graded work

• you care about refunds or want a guarantee tied to detector performance

• you handle anything sensitive or under NDA

If you still want to try it, I would suggest:

- Start with short, non-sensitive text.

- Run the original and the humanized version through the exact detector your teacher, employer, or platform uses.

- Only upgrade if you see clear improvement on that specific tool, not on random online checkers.
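The steps above can be turned into a simple check if you note down the scores by hand. This is only a sketch: the 20-point threshold is an arbitrary number I picked for illustration, the scores are placeholders and not real measurements, and `clear_improvement` is a made-up helper name. It does not call any detector; you paste in the numbers yourself.

```python
def clear_improvement(before, after, min_drop=20):
    """True only if every sample's AI score fell by at least min_drop points.

    min_drop is an arbitrary threshold chosen for illustration.
    """
    if len(before) != len(after) or not before:
        raise ValueError("need matching, non-empty score lists")
    return all(b - a >= min_drop for b, a in zip(before, after))

# Placeholder scores (percent AI) from one detector, not real measurements.
before = [98, 97, 99]
after_mixed = [65, 97, 70]    # one sample did not improve
after_steady = [60, 62, 58]   # every sample improved

print(clear_improvement(before, after_mixed))   # False
print(clear_improvement(before, after_steady))  # True
```

Requiring every sample to improve is deliberately strict. One good score next to one unchanged score is the kind of inconsistency that would make me hold off on paying.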

For me, once I saw GPTZero stick to 100% on three different samples, I moved on and stayed with Clever AI Humanizer for this use case.

I used WriteHuman for about a week on client blog posts and some ad copy. Mixed bag.

Quality

For short copy, like 100–200 word blurbs, it did ok. The tone still felt AI-ish in places, but not as stiff as raw GPT output. For longer posts, the voice drifted a lot. First few paragraphs sounded like a blog, then it shifted into textbook mode. I had to rewrite about 40–50% to make it sound like one person.

Originality

I ran my “before” and “after” text through a couple of plagiarism tools. No direct copying issues, so originality was fine on that front. On AI detectors, my experience was a bit different from @mikeappsreviewer. GPTZero still flagged some as high AI, but not always 100%. I saw scores drop from the high 90s to the 60–70 range on a few pieces. On others, there was no improvement. So results are inconsistent.

Detectors

If your main goal is “must pass GPTZero or similar”, I would not rely on it. Treat it as a style rewriter, not a magic AI eraser. Test on the exact detector your teacher, client, or company uses, every time.

Pricing

The 80 requests on the basic paid plan disappear fast if you work with long-form content. There is no refund, so if it does not work with your detector mix, you eat the cost. For occasional small tasks it might be ok. For heavy content work, the value feels weak.

Data and privacy

Big one for me. Their terms say they can use your inputs for AI training. For school essays, client docs, internal stuff, I would not send it through. That alone pushed me to stop using it for agency work.

Workflow advice if you still want to try it

- Only send non-sensitive text.

- Keep paragraphs short, then glue them together yourself. It seemed to do slightly better on small chunks.

- Rewrite the first and last paragraph by hand. Detectors often look more closely at intros and conclusions.

- Always run your own grammar and style check after. I also saw a few typos and weird phrasing, similar to the “shfits” issue mentioned before.

Comparison

I later tried Clever AI Humanizer on the same texts. For my use, it produced smoother copy and more consistent drops on detector scores. It also felt easier to test without stressing about monthly fees. I still edit heavily, but I spend less time fixing tone swings.

If your priorities are:

• client-safe text and clean tone, I would lean away from WriteHuman or accept a lot of manual editing.

• experimentation with detectors and cost control, I would start with Clever AI Humanizer and only pay for something like WriteHuman if you see a clear, repeatable gain for your specific detector setup.

Tried it for a month on client stuff and a couple of “test essays,” so here’s the blunt version.

Quality

For short bits of copy (taglines, 1–2 paragraph blurbs), WriteHuman was… fine. Not amazing, not unusable. It nudged the text away from that super-polished “AI sheen,” but it also introduced weird rhythm and occasional clunky phrasing. I saw the same tone whiplash @mikeappsreviewer and @caminantenocturno mentioned: one paragraph reads like a blog, the next like a policy memo. If you’re picky about voice, you’ll be rewriting a lot.

Originality

Plagiarism-wise, I did not see any blatant copying when I ran my before/after through standard plagiarism tools. So if your worry is “is it just stealing text,” that part looked ok for me. Where I slightly disagree with them is that I don’t think it adds much real originality either. It’s mostly a stylistic remix of what you feed it. If your base text is dull, it stays dull, just with different sentence structures.

AI detection

I tested it across a handful of detectors (including GPTZero). Same pattern they saw: sometimes scores dropped a bit, sometimes they did not budge, sometimes it even looked more AI-ish. If you’re hoping for “press button, magically human,” you’re setting yourself up for disappointment. Treat it as a rephraser, not a stealth cloak.

Pricing

This part annoyed me the most. The 80-request basic plan evaporates if you’re dealing with long-form content. No refunds plus no guarantee on any detector means you’re basically gambling on whether it will cooperate with your checker. For casual or hobby use, maybe acceptable. For heavy content production, value feels weak.

Data / privacy

Their “we can use your input for training” policy is a dealbreaker for anything even mildly sensitive. Client docs, resumes, internal notes, academic drafts you actually care about staying private… I would not touch WriteHuman with that stuff. That’s not paranoia, that’s just basic opsec.

Where it might make sense

- You already plan to edit heavily

- The text is non-sensitive

- You want a slightly different wording pass and don’t mind quirks

Where I’d skip it

- You’re under pressure to pass GPTZero or similar

- You need consistent tone for brand voice or academic work

- You’re on a tight budget and need reliable value, not “maybe it helps”

Alternative

If your main interest is AI-detection tinkering, I’d honestly spend time playing with Clever AI Humanizer first. In my tests it gave smoother copy and slightly more consistent drops on detector scores, and it’s easier to experiment with. It’s not magical either, but for “AI humanizer” specifically, Clever AI Humanizer feels more aligned with what people are actually trying to do here.

TL;DR: WriteHuman won’t ruin your text, but it also won’t save you from doing real editing, and it definitely won’t reliably save you from detectors. If you’re ok with that tradeoff and the data policy, it might be usable. If not, I’d look elsewhere or just edit by hand + maybe run things through something like Clever AI Humanizer as a supplement, not a crutch.